Terra-Master F8 SSD - Setup & Configuration

Terra-Master F8 SSD - Fixing Fedora Boot Issues

Having problems getting Fedora, Ubuntu, or any other Linux distro to use the NVMe drives? Getting namespace errors? Getting ACPI errors?

https://forums.truenas.com/t/terramaster-f8-ssd-plus-truenas-install-log/13867/22

In the BIOS, go to Chipset -> North Bridge and change “VT-d” to Disabled, then save the BIOS settings and reboot.
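After the reboot, one way to check that the change took effect is to look at sysfs. This is a minimal sketch, assuming a Linux kernel that lists registered IOMMU units under /sys/class/iommu and NVMe controllers under /sys/class/nvme. The function names are made up for illustration and are not from the linked forum thread.

```python
import os


def list_sysfs_entries(sysfs_dir):
    """Return the sorted entry names in a sysfs directory, or [] if it is absent."""
    try:
        return sorted(os.listdir(sysfs_dir))
    except FileNotFoundError:
        return []


def vtd_report(iommu_dir="/sys/class/iommu", nvme_dir="/sys/class/nvme"):
    """Summarize whether an IOMMU looks active and which NVMe controllers exist.

    Assumption: an empty or missing iommu_dir means no IOMMU (VT-d) is in use.
    """
    iommu_units = list_sysfs_entries(iommu_dir)
    nvme_ctrls = list_sysfs_entries(nvme_dir)
    return {
        "iommu_active": bool(iommu_units),
        "iommu_units": iommu_units,
        "nvme_controllers": nvme_ctrls,
    }


if __name__ == "__main__":
    print(vtd_report())
```

With VT-d disabled, I would expect `iommu_active` to be false and the NVMe controllers to show up in `nvme_controllers`, but treat that as a sanity check rather than proof.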

Terra-Master F8 - Mobile SSD Linux/ZFS iSCSI Target

Ceph For Media Storage (Big But Slow I/O)

My Ceph cluster at home isn't designed for performance. It's not designed for maximum availability. It's designed for a low cost per TiB while still maintaining usability and decent disk-level redundancy. Here is some recent tuning to help with performance and corruption prevention...

23TiB On CephFS & Growing

Original post on Reddit

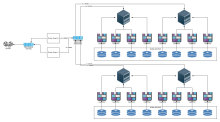

Hardware

Previously I posted about the used 60-bay DAS units I recently acquired and racked. Since then I've figured out the basics of using them and have them up and working.

Ceph Orch Error When Adding Host

[ceph: root@xxxxxx0 /]# ceph orch host add xxxxxxxxxxx1
Error EINVAL: Traceback (most recent call last):
  File "/usr/share/ceph/mgr/mgr_module.py", line 1153, in _handle_command
    return self.handle_command(inbuf, cmd)
  File "/usr/share/ceph/mgr/orchestrator/_interface.py", line 110, in handle_command
    return dispatch[cmd['prefix']].call(self, cmd, inbuf)
  File "/usr/share/ceph/mgr/mgr_module.py", line 308, in call
    return self.func(mgr, **kwargs)
  File "/usr/share/ceph/mgr/orchestrator/_interface.py", line 72, in <lambda>
    wrapper_copy = lambda *l_args,

Admin Access To NEWISYS NDS-4600-JD

- One IP per mgmt card
- TCP/23
  - Basic telnet
  - No login
  - Can control various backplane options
  - Working on finding out how to control backplane mode
- TCP/1138
  - Not TLS
  - openssl s_client causes it to restart
  - Telnet??
  - Need to fuzz this
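To poke at the two ports without sending anything (which matters here, since an openssl s_client handshake makes TCP/1138 restart), a passive banner grab is a reasonable first step. This is a rough sketch using only Python's standard `socket` module. The `probe` helper is my own name, and whether the mgmt card sends anything unprompted on TCP/1138 is unknown.

```python
import socket


def probe(host, port, timeout=3.0):
    """Connect to host:port and return (is_open, first_bytes).

    Nothing is sent to the peer; we only wait up to `timeout` seconds
    for whatever it volunteers (for example a telnet negotiation or banner).
    """
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.settimeout(timeout)
            try:
                data = sock.recv(256)
            except OSError:
                # Timed out or reset while waiting: the port is open but silent.
                data = b""
            return True, data
    except OSError:
        # Refused, unreachable, or connect timed out.
        return False, b""
```

Usage would look like `probe("192.0.2.10", 23)` and `probe("192.0.2.10", 1138)`, where 192.0.2.10 is a placeholder for the mgmt card's IP. Printing the returned bytes with `repr()` makes telnet option bytes (starting with `\xff`) easy to spot.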

Admin Access To NEWISYS NDS-4600-JD

Guess what has telnet enabled and TCP/1138 open? Telnet is obvious, but I'm still working on TCP/1138.